Neural Graphics: AI Takes Over the Rendering Pipeline

For decades, rendering graphics followed a predictable path: artists created assets, programmers wrote shaders, and GPUs processed triangles. At GDC 2026, Arm demonstrated that this paradigm is about to be upended by neural graphics—techniques that use machine learning to generate, upscale, and interpolate images directly.

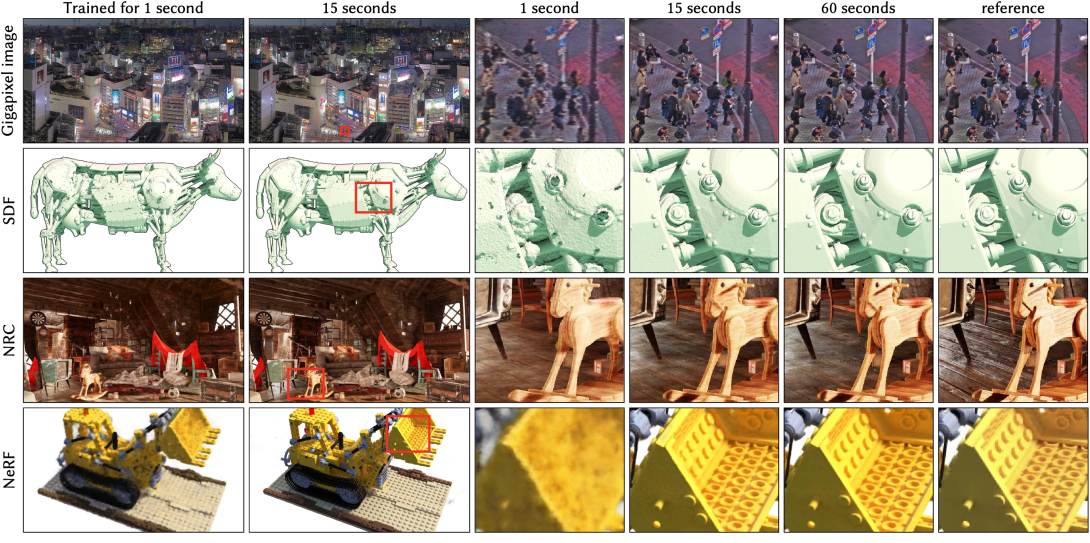

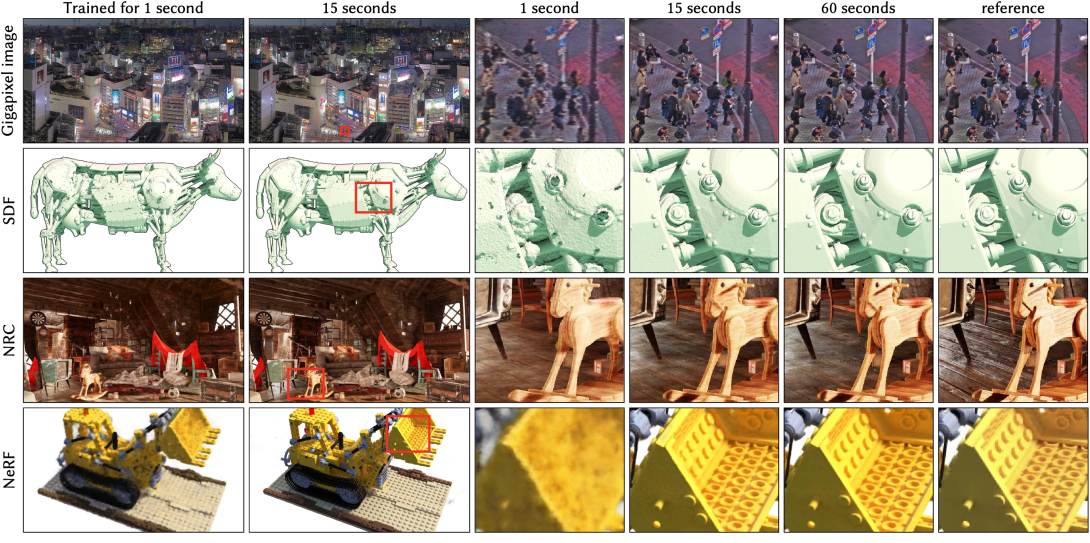

Arm’s Neural Frame Rate Upscaling (NFRU) represents a breakthrough for mobile gaming. The technology uses neural inference to synthesize intermediate frames between traditionally rendered ones, effectively doubling perceived frame rates without doubling the GPU workload. When scene complexity rises and frame time becomes the bottleneck, NFRU lets the GPU skip rendering some frames entirely and generate them instead with a neural network at a fraction of the compute cost.
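To make the frame-rate arithmetic concrete, here is a rough sketch in Python. It assumes rendering and neural inference run one after the other on the same timeline, and every number in it is hypothetical rather than a measurement from Arm. The function name `effective_fps` and its parameters are made up for illustration.

```python
def effective_fps(render_ms: float, infer_ms: float,
                  generated_per_rendered: int = 1) -> float:
    """Displayed frames per second when each rendered frame is followed by
    `generated_per_rendered` synthesized frames.

    Assumption: render and inference costs add up serially. Real hardware
    may overlap them, which would only improve the result.
    """
    frames_shown = 1 + generated_per_rendered
    total_ms = render_ms + generated_per_rendered * infer_ms
    return 1000.0 * frames_shown / total_ms


# Hypothetical costs: 25 ms to render a frame, 5 ms to synthesize one.
baseline = effective_fps(render_ms=25.0, infer_ms=0.0, generated_per_rendered=0)
with_generation = effective_fps(render_ms=25.0, infer_ms=5.0)

print(f"rendered only:      {baseline:.1f} fps")
print(f"with one generated: {with_generation:.1f} fps")
```

With these made-up costs the displayed rate rises from 40 fps to roughly 66.7 fps. The rate only doubles outright if synthesis is free, so the benefit depends on how cheap inference is relative to a full render.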

This isn’t theoretical. Arm showcased NFRU running inside Project Buzz, a production-quality reference game developed in collaboration with Sumo Digital. The demonstration proved that neural techniques can coexist with advanced rendering features like ray-traced lighting and complex shading within mobile power budgets. For developers targeting high-end mobile experiences, this changes the equation entirely.

The underlying architecture matters. Arm is integrating dedicated neural accelerators directly into future GPUs, so machine learning workloads can run alongside traditional graphics tasks without interfering with them. This tight coupling lets developers replace compute shaders with neural accelerator dispatches using familiar engine workflows: Unreal Engine plugins and Vulkan extensions are already available through Arm’s Neural Graphics Development Kit.

Neural Super Sampling (NSS) preceded NFRU, replacing traditional shader-based upscaling with lightweight kernel-prediction networks that deliver higher image quality while reducing GPU load. Together, these techniques promise “console-class visual quality on mobile within power budget”—a phrase that would have seemed fantastical just a few years ago.
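The general idea behind kernel prediction can be sketched in a few lines of Python. This is not Arm's NSS implementation: it is a toy illustration in which the per-pixel 3x3 kernels that a network would normally predict are replaced by fixed stand-ins, and the helper names (`nearest_upscale`, `apply_predicted_kernels`) are hypothetical.

```python
def nearest_upscale(image, factor):
    """Naive nearest-neighbour upscale of a 2D list of floats."""
    h, w = len(image), len(image[0])
    return [[image[y // factor][x // factor] for x in range(w * factor)]
            for y in range(h * factor)]


def apply_predicted_kernels(image, kernels):
    """Filter each pixel with its own 3x3 kernel.

    image:   H x W list of floats.
    kernels: H x W list of 3x3 weight grids (in a real system these would
             come from a neural network; here they are supplied by hand).
    Samples outside the image are clamped to the nearest edge pixel.
    """
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            k = kernels[y][x]
            acc = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    sy = min(max(y + dy, 0), h - 1)
                    sx = min(max(x + dx, 0), w - 1)
                    acc += k[dy + 1][dx + 1] * image[sy][sx]
            out[y][x] = acc
    return out


# Stand-in kernels: identity keeps a pixel as-is, box blurs its neighbourhood.
IDENTITY = [[0.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 0.0]]
BOX = [[1.0 / 9.0] * 3 for _ in range(3)]

low_res = [[0.0, 1.0], [1.0, 0.0]]
upscaled = nearest_upscale(low_res, 2)

identity_kernels = [[IDENTITY for _ in row] for row in upscaled]
box_kernels = [[BOX for _ in row] for row in upscaled]

unchanged = apply_predicted_kernels(upscaled, identity_kernels)
smoothed = apply_predicted_kernels(upscaled, box_kernels)
```

The point of the approach is that the network outputs filter weights rather than colours, so the final image is always a weighted blend of real rendered samples. In a production upscaler the kernels would vary per pixel, sharpening along edges and smoothing flat regions.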

For developers, the message from Arm is urgent: “The studios that build intuition around frame structuring, motion data, and neural scheduling today will be the ones ready when this hardware becomes mainstream.” Waiting until the tools are broadly available compresses integration timelines and limits how deeply teams come to understand these techniques. Neural graphics aren’t future experiments; they’re becoming foundational components of modern rendering pipelines.
